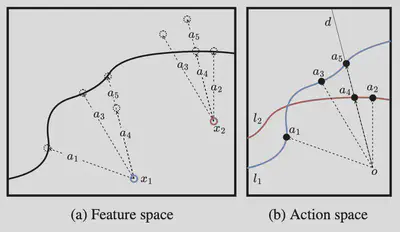

We propose a method for global explainability of black-box models using counterfactual explanations. A counterfactual explanation locally explains an outcome by providing the minimal changes necessary to reverse the outcome, e.g., “if you had five more years of experience, your job application would have been accepted”. We develop a method, termed GLANCE, that summarizes all counterfactual explanations for a given model.

Solving this global version of counterfactual explainability is different from finding the local counterfactual explanations and picking among them.
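
To make the distinction between local and global concrete, the following is a minimal toy sketch in Python. It is not the GLANCE algorithm; the hiring rule `accepted`, the 8-year threshold, the helper names `local_counterfactual` and `coverage`, and the applicant data are all hypothetical and chosen only for illustration. It computes one local counterfactual per rejected applicant, then measures how many rejections a single shared action would reverse.

```python
# Toy illustration only: a hypothetical hiring rule, not the GLANCE algorithm.

def accepted(years_experience: int) -> bool:
    """Hypothetical black-box model: accept applicants with at least 8 years."""
    return years_experience >= 8


def local_counterfactual(years_experience: int, max_extra: int = 50):
    """Smallest number of extra years that turns a rejection into an acceptance."""
    for extra in range(max_extra + 1):
        if accepted(years_experience + extra):
            return extra
    return None  # no counterfactual found within the search budget


def coverage(applicants, extra: int) -> float:
    """Fraction of rejected applicants that one shared action (+extra years) flips."""
    rejected = [x for x in applicants if not accepted(x)]
    if not rejected:
        return 1.0  # nothing to flip
    flipped = [x for x in rejected if accepted(x + extra)]
    return len(flipped) / len(rejected)


applicants = [3, 5, 7, 10]  # hypothetical years of experience

# Local view: each rejected applicant gets an individual minimal change.
local = {x: local_counterfactual(x) for x in applicants if not accepted(x)}

# Global view: one action applied to everyone, judged by how many it helps.
coverage_plus_3 = coverage(applicants, 3)
coverage_plus_5 = coverage(applicants, 5)
```

In this toy setting the local explanations differ per applicant, while a single shared action trades off cost against the share of rejections it reverses, which is the kind of tension a global summary has to resolve.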